Like most VMware specialists, I follow Duncan Epping’s Yellow-Bricks blog and am always impressed with the quality of the writing and technical content. This week there was a guest post by Craig Risinger about the unexpected effect of using only shares to arbitrate between resource pools. Craig’s pictures tell a thousand words on the effect of a large population of VMs in a large resource pool vs. a small population in a large pool.

The problem is that while shares are simple to configure, they’re the wrong tool. Fundamentally, shares guarantee nothing. The correct tool to guarantee resources to VMs is a reservation. Enough resource should be reserved for a VM to allow it to provide acceptable performance. The other guarantee is a limit: we guarantee not to provide more resources than the limit. Shares should be used to divide up the resources left over after VMs have taken what they need. Remember that shares only apply if there isn’t enough resource to satisfy all requirements.

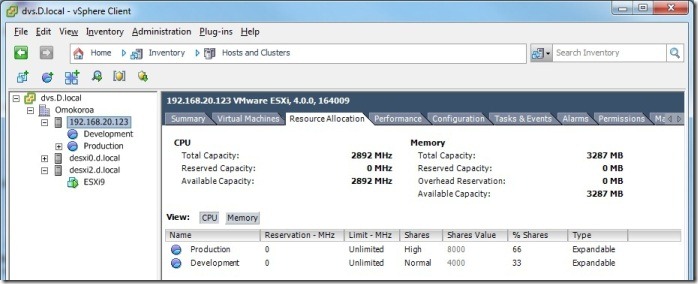

Let’s look at the simplest case that illustrates Craig’s point: a Prod resource pool with 8000 shares containing three VMs and a Dev resource pool (the Test pool in the tests below) with 4000 shares containing only one VM. All four VMs are identical and attempt to consume as much CPU time as possible. They run Windows XP and the cpubusy.vbs script we use in the VMware courses. The script is modified to do more sines before reporting time, to make time differences more visible. My test ESX server is an HP BL460c with two quad-core 1.866 GHz CPUs running ESXi V4.0 with Update 1.

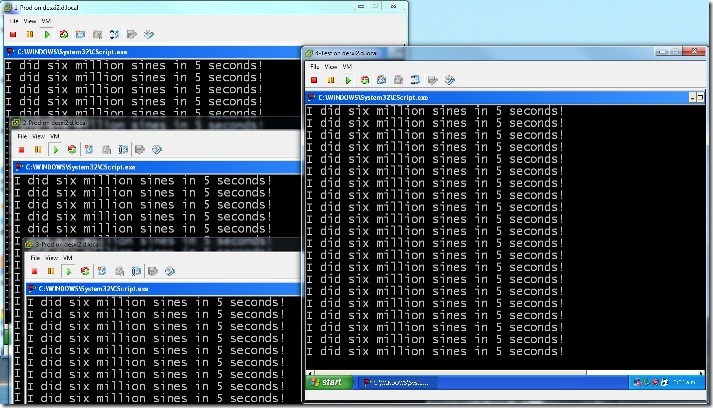

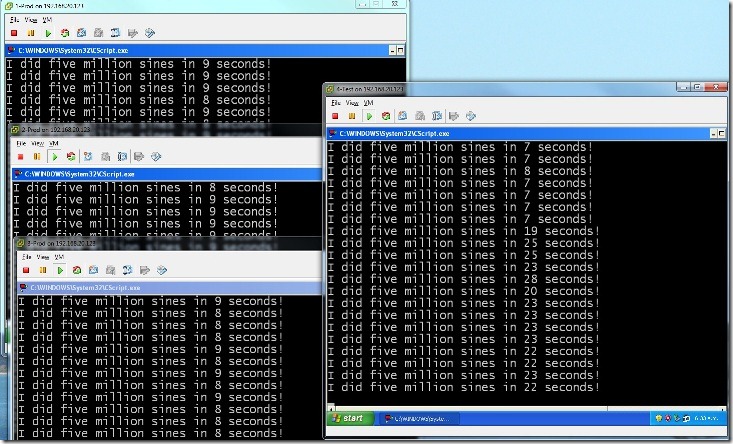

Test 1: Shares only

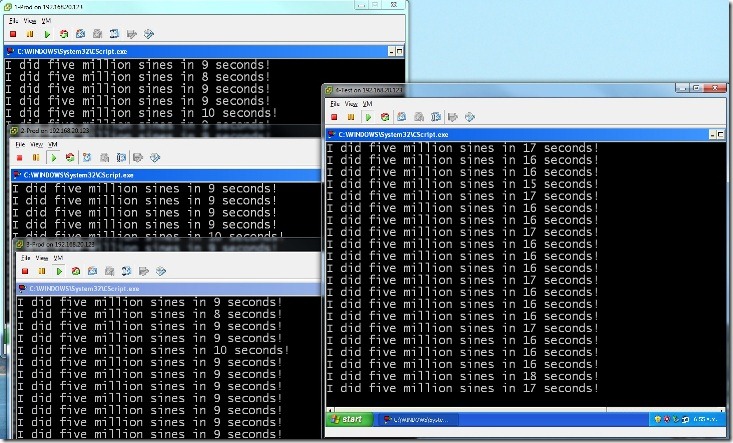

In this test all four VMs run in their resource pools with access to the whole ESX server, so each VM got a core to itself. This is the situation of ample resource for the requirements and is as fast as the system will run: 5 seconds. As there are ample resources, shares are irrelevant. The three Production VMs are stacked up on the left side and the one Test VM is on the right.

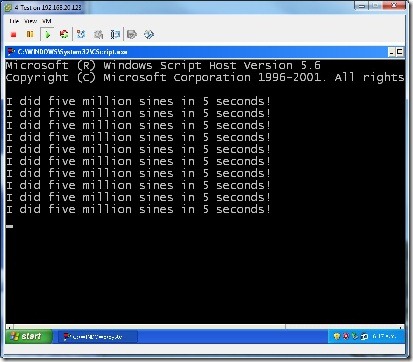

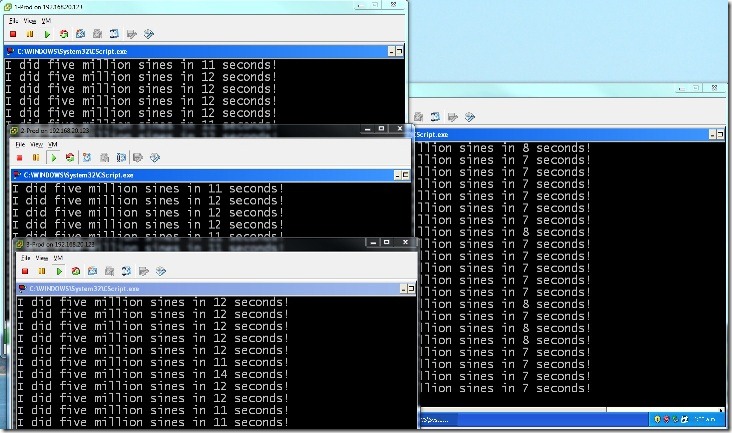

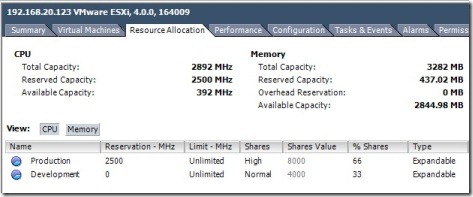

Test 2: Shares and restricted CPU

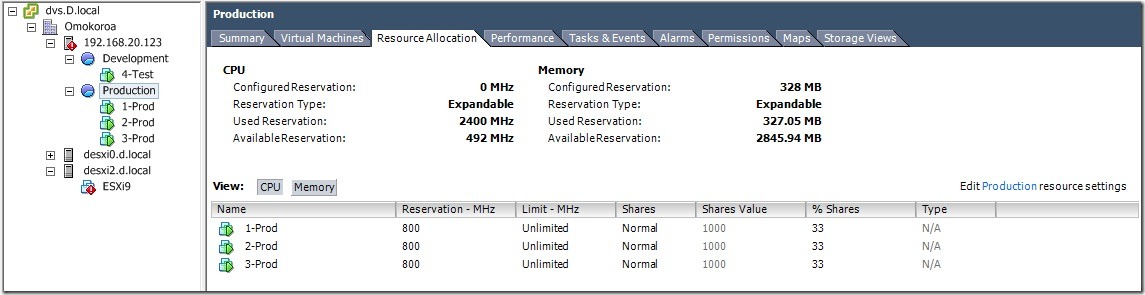

In this test the four VMs must compete for just two CPUs in the ESX server. I did this by running a 2 CPU ESX server as a VM on my 8 CPU host. I tried to do this with CPU affinity and will blog about that in another post. For information about ESXi as a VM on ESX, take a look at my post from last year. To normalise my results against Test 1, I had to reduce the work unit to five million sines for the VM running inside an ESXi host that was itself a VM on my main host. The resource pools can be seen in the second picture below, along with the VM ESXi9, which is the nested ESX server: it is the ESX host 192.168.20.123 that hosts the resource pools.

As expected, the three VMs vying for the Production resource pool take longer to complete their task (12 seconds) than the one VM in the Test resource pool (7 seconds). This is the issue at the centre of The Resource Pool Priority-Pie Paradox that Craig wrote about. High shares on a resource pool don’t guarantee a lot of resource to the individual VMs in that resource pool.
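The arithmetic behind this result can be sketched in a few lines of Python. This is a simplified model and not the ESX scheduler: it assumes the host is fully contended, every VM is fully busy, and VMs inside a pool have equal shares. The function name `per_vm_fraction` is made up for illustration.

```python
from fractions import Fraction


def per_vm_fraction(pool_shares, vm_counts):
    """Fraction of the contended host that each VM in a pool is entitled to.

    Assumption: sibling pools split the host in proportion to their shares,
    and each pool splits its slice evenly between its VMs.
    """
    total_shares = sum(pool_shares.values())
    return {
        pool: Fraction(shares, total_shares) / vm_counts[pool]
        for pool, shares in pool_shares.items()
    }


# Pool shares and VM counts from the test setup described above.
entitlement = per_vm_fraction(
    {"Production": 8000, "Test": 4000},
    {"Production": 3, "Test": 1},
)
for pool, fraction in entitlement.items():
    print(pool, fraction)
# Production 2/9
# Test 1/3
```

In this model each Production VM is entitled to 2/9 of the host and the Test VM to 1/3, so the Test VM gets 1.5 times the CPU of a Production VM even though its pool holds half the shares. The measured 12 seconds against 7 seconds points the same way.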

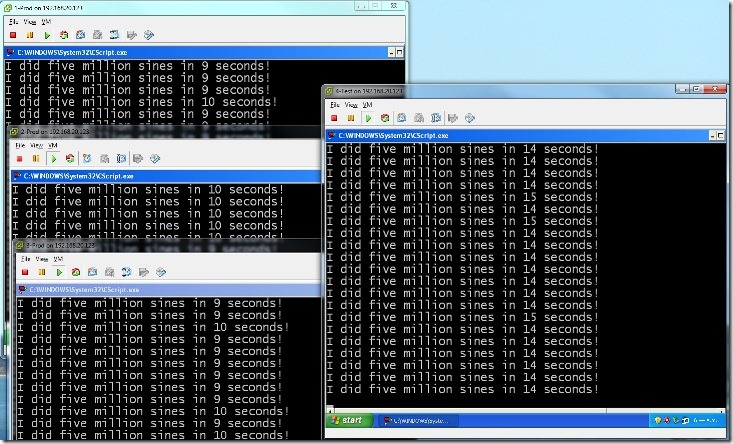

Test 3: Resource Pool Reservations

I was in the ANZ VMLive Webex yesterday, where Mike Bookey from VMware’s Sydney office advised setting reservations on resource pools as a way to guarantee resources to VMs. I’d never given the idea any thought, but it would be easier than setting reservations on every VM. So in this test I reserved 2.5 GHz for the Production resource pool, which is slightly less CPU time than the two cores can deliver. As can be seen in the picture, this was immediately effective at delivering more CPU resources to the three Production VMs (8 seconds to complete), at the expense of the Test VM (22 seconds).

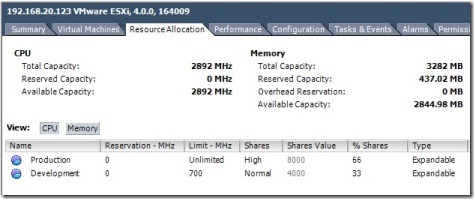

Test 4: Resource Pool Limits

In this test I removed the reservation on Production and instead applied a limit of 700 MHz to the Test resource pool. This delivered less CPU to the Test VM than the base resource pools did, but in this case more than the reservation allowed in Test 3. A limit can be a good way to cap resource demands, but it doesn’t guarantee anything to another VM, and it can artificially constrain performance of VMs in the limited resource pool.
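To see how shares, a reservation and a limit interact between two sibling pools, here is a minimal sketch. It is a toy model, not VMware’s algorithm: each pool gets capacity in proportion to its shares, but never less than its reservation and never more than its limit, and the scaling factor is found by bisection. The 3000 MHz capacity is a made-up round number, not a measurement from my nested host, and the function name `allocate` is hypothetical.

```python
import math


def allocate(capacity_mhz, pools):
    """Split capacity between fully busy sibling pools.

    pools maps name -> (shares, reservation_mhz, limit_mhz); a limit of
    None means unlimited. Each pool receives lam * shares, clamped to
    [reservation, limit], with lam chosen so the grants add up to capacity.
    """
    if sum(r for _, r, _ in pools.values()) > capacity_mhz:
        raise ValueError("reservations exceed capacity")

    def grant(lam, shares, reservation, limit):
        upper = math.inf if limit is None else limit
        return min(max(lam * shares, reservation), upper)

    def total(lam):
        return sum(grant(lam, *pool) for pool in pools.values())

    # total(lam) never decreases as lam grows, so bisection finds the split.
    lo, hi = 0.0, capacity_mhz / min(s for s, _, _ in pools.values())
    for _ in range(100):
        mid = (lo + hi) / 2
        if total(mid) < capacity_mhz:
            lo = mid
        else:
            hi = mid
    return {name: round(grant(hi, *pool), 1) for name, pool in pools.items()}


CAPACITY_MHZ = 3000  # hypothetical host capacity, for illustration only

shares_only = allocate(CAPACITY_MHZ, {"Production": (8000, 0, None), "Test": (4000, 0, None)})
prod_reserved = allocate(CAPACITY_MHZ, {"Production": (8000, 2500, None), "Test": (4000, 0, None)})
test_limited = allocate(CAPACITY_MHZ, {"Production": (8000, 0, None), "Test": (4000, 0, 700)})

print(shares_only)    # {'Production': 2000.0, 'Test': 1000.0}
print(prod_reserved)  # {'Production': 2500.0, 'Test': 500.0}
print(test_limited)   # {'Production': 2300.0, 'Test': 700.0}
```

With these assumed numbers the pattern matches what I saw: the limit leaves the Test pool with less than shares alone would, but with more than it gets when Production holds a 2.5 GHz reservation.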

Test 5: VM Reservations

In this test I removed the limit from the Test resource pool and applied an 800 MHz reservation to each VM in the Production resource pool.

This also delivered more resources to the Production VMs than to the Test VM.
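A quick way to compare Test 3 and Test 5 is to work out what each setting means per VM. The sketch below assumes a pool’s reservation ends up divided equally between equally busy VMs with equal shares, which is a simplification. The function names are hypothetical.

```python
def per_vm_guarantee_mhz(pool_reservation_mhz, vm_count):
    """Pool reservation split evenly across the VMs competing inside the pool."""
    return pool_reservation_mhz / vm_count


def total_reserved_mhz(per_vm_reservation_mhz, vm_count):
    """Total CPU set aside when every VM carries its own reservation."""
    return per_vm_reservation_mhz * vm_count


# Test 3: 2.5 GHz reserved on the Production pool, three VMs inside it.
print(round(per_vm_guarantee_mhz(2500, 3)))  # 833

# Test 5: 800 MHz reserved on each of the three Production VMs.
print(total_reserved_mhz(800, 3))  # 2400

# The same pool reservation is spread thinner as VMs are added to the pool.
for vm_count in (3, 4, 5):
    print(vm_count, round(per_vm_guarantee_mhz(2500, vm_count)))
# 3 833
# 4 625
# 5 500
```

The two settings reserve a similar total here, but the pool-level figure per VM shrinks as VMs are added to the pool, whereas a per-VM reservation stays with the VM.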

Conclusion

Reservations are your best tool to deliver guaranteed resource to VMs. Reservations can be set on a resource pool to guarantee resources to the child objects, or on individual VMs. As with most things, setting values on container objects is operationally simpler than setting values on every object within the container, but sacrifices granularity. Naturally, nothing prevents you setting reservations on a resource pool and again at the VM level for highly critical VMs.

As with any tool, the result produced depends on the care in its use. Your reservations will not be static unless your VM population and its workload are also static. Ongoing monitoring and planning are essential in any shared resource environment.

Good use of reservations has the side effect of giving visibility to the potential loading of your environment. As resource is judiciously reserved, the unreserved resource shows the available capacity of your ESX server or DRS cluster.

© 2010, Alastair. All rights reserved.

Good post Alastair and thanks for your clear explanation of the differences on the APAC Virtualisation podcast #5 tonight.

I have a question about reservation at pool level. As in Test #3, if you reserve 2.5GHz to the production pool, what does it mean to the VMs in that pool? Do they share the 2.5GHz equally? (~800MHz per VM)

Is that why you used 800MHz when configuring reservation at VM level in Test #5?

If this is the case, wouldn’t reservation at pool level eventually also suffer from the “pie paradox” mentioned in the yellow-bricks blog?

Daniel,

You are quite right, a resource pool with a reservation can be over-occupied just as easily as one with just shares.

I guess the solution is that part of the VM provisioning process needs to be allocation of reservation, either to the VM or to the resource pool it will be placed in.